TL;DR

- AI Datacenter Parks are becoming India’s backbone for high-density, AI-first compute, as demand now outpaces traditional datacenter designs.

- Intelligent AI Infrastructure requires rethinking power, thermals, GPU fabrics, and multi-zone resilience at architectural scale.

- Enterprises need AI-Optimized Datacenter Parks with automated failover, AI-based risk assessment, and DRaaS-native design.

- Smart datacenter ecosystems, not isolated racks, will define the next decade of enterprise AI acceleration in India.

- RackBank’s AI Datacenters show how integrated GigaCampus + Edge + Core architectures deliver real-world scalability for AI workloads.

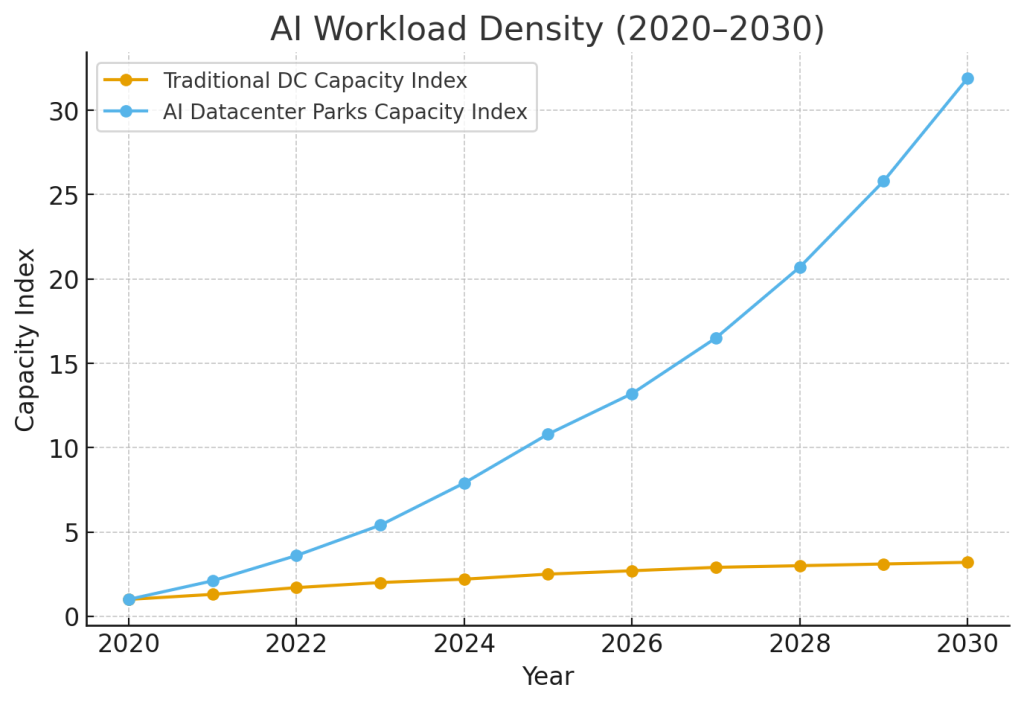

India is entering an inflection point where AI Datacenter Parks are becoming foundational to national competitiveness. Over the past few years, I’ve watched enterprise AI workloads grow not linearly, but exponentially. Models that required 2–5 GPUs in 2020 now routinely require 200–800 in production training pipelines. This shift is redefining what future-ready datacenters must provide.

Traditional facilities optimized for virtualization, cloud hosting, or basic enterprise compute simply cannot sustain the power densities, thermal profiles, interconnect speeds, or resilience demands of modern AI systems. Here’s what I’m seeing across India’s evolving digital infrastructure landscape and why the next leap will come from Smart, AI-Optimized Datacenter Parks.

The Shift: Why AI Datacenter Parks Are Replacing Traditional Architectures

India’s AI economy is projected to reach USD 17B by 2027, driven by generative AI startups, BFSI automation, mobility AI, and public-sector digitization. But this growth exposes a structural gap: enterprises need AI Innovation Hubs that are GPU-dense, energy-efficient, and operationally autonomous.

AI Demands a Different Kind of Infrastructure

- High-TDP GPU clusters require 3–5× the cooling intensity of CPU-heavy racks.

- AI fabrics (NVLink, InfiniBand, 400G/800G Ethernet) lose much of their performance on spine-leaf designs that were not optimized for them.

- AI-powered backup and restore is becoming standard for enterprise AI pipelines.

- Disaster Recovery for AI workloads now requires low-latency multi-zone replication, not asynchronous backups.

This is why RackBank AI Datacenters are designed as integrated parks, not siloed buildings.

The Architecture of Intelligent AI Infrastructure

Multi-Zone Datacenter Deployment for Extreme Availability

Enterprises are shifting from N+1 logic to N+N multi-zone architecture, where redundancy is geographic, not just hardware-based.

This enables:

- Automated failover systems

- Real-time DRaaS

- AI-based risk assessment

- Business continuity planning with AI
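To make the gap between hardware-level and geographic redundancy concrete, here is a minimal sketch. It assumes units and zones fail independently (a simplification, since real zones can share some risks), and the availability figures are illustrative placeholders, not RackBank measurements. The function names are hypothetical.

```python
from math import comb


def k_of_n_availability(n: int, k: int, unit_availability: float) -> float:
    """Probability that at least k of n independent units are up."""
    p = unit_availability
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def multi_zone_availability(zone_availabilities: list[float]) -> float:
    """Probability that at least one zone is up, assuming independent zones."""
    all_down = 1.0
    for a in zone_availabilities:
        all_down *= 1.0 - a
    return 1.0 - all_down


# Hypothetical N+1 setup inside one zone: 5 units installed, 4 needed.
single_zone = k_of_n_availability(n=5, k=4, unit_availability=0.99)

# The same setup mirrored across two independent zones.
two_zones = multi_zone_availability([single_zone, single_zone])

print(f"single zone (N+1): {single_zone:.6f}")
print(f"two zones:         {two_zones:.8f}")
```

Under these assumptions, mirroring across zones multiplies the failure probabilities together, which is why geographic redundancy can add availability that extra hardware inside one building cannot.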

In RackBank’s GigaCampus deployments, airflow, energy, route diversity, and transport fabrics are engineered with AI-first workloads in mind, not retrofitted after deployment.

Datacenter Resilience Technologies

AI Datacenter Parks integrate:

- Predictive failure models (powered by operational AI)

- Liquid and hybrid cooling with 50%+ energy efficiency gains

- Redundant, India-compliant power grid infrastructure

- AI-driven capacity orchestration

The Rise of AI-Optimized Datacenter Parks

From Facilities to Intelligent Ecosystems

A smart datacenter is no longer a building. It is a living system that continuously optimizes power, interconnects, workload placement, and thermal behavior.

Key Characteristics

- AI-ready infrastructure for India’s digital transformation

- Dedicated zones for training, inference, edge, and archival workloads

- Automated environmental and asset telemetry

- Predictive maintenance and real-time rack health scoring

- GPU cluster auto-rightsizing based on demand patterns
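As an illustration of what real-time rack health scoring could look like, here is a minimal sketch. Everything in it is an assumption made for the example: the telemetry fields, the thresholds, and the weights are hypothetical and do not describe any particular vendor's or RackBank's scoring model.

```python
from dataclasses import dataclass


@dataclass
class RackTelemetry:
    inlet_temp_c: float      # air temperature at the rack inlet
    power_draw_kw: float     # current power draw
    power_budget_kw: float   # provisioned power for the rack
    fan_failures: int        # number of failed fans reported


def clamp(x: float, lo: float = 0.0, hi: float = 1.0) -> float:
    return max(lo, min(hi, x))


def rack_health_score(
    t: RackTelemetry,
    nominal_inlet_c: float = 22.0,  # hypothetical "healthy" inlet temperature
    max_inlet_c: float = 32.0,      # hypothetical upper limit
) -> float:
    """Return a 0-100 score; higher means healthier. Weights are illustrative."""
    # 1.0 at or below nominal inlet temperature, 0.0 at or above the limit.
    thermal = 1.0 - clamp((t.inlet_temp_c - nominal_inlet_c) / (max_inlet_c - nominal_inlet_c))
    # No penalty up to 80% of the power budget, falling to 0.0 at 100%.
    utilization = t.power_draw_kw / t.power_budget_kw
    power = 1.0 - clamp((utilization - 0.8) / 0.2)
    # Each failed fan removes a quarter of the fan component.
    fans = clamp(1.0 - 0.25 * t.fan_failures)
    return 100.0 * (0.5 * thermal + 0.3 * power + 0.2 * fans)


healthy = rack_health_score(RackTelemetry(22.0, 40.0, 100.0, 0))
stressed = rack_health_score(RackTelemetry(27.0, 90.0, 100.0, 1))
print(f"healthy rack:  {healthy:.1f}")
print(f"stressed rack: {stressed:.1f}")
```

A production system would more likely learn these weights from failure history than hard-code them; the point here is only that a single comparable score per rack makes placement and maintenance decisions easier to automate.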

This is transforming how both enterprises and startups scale.

A fintech startup can spin up a 512-GPU cluster for large-scale risk modeling and expand to 1,024 GPUs within 24 hours without architectural rewiring.

AI Datacenter Parks: Igniting India’s Next Technological Revolution

India doesn’t merely need capacity; it needs agility at national scale. AI Datacenter Parks allow:

- Unified GigaCampus + Edge + Core architecture

- Low-latency access for AI inference workloads

- High-bandwidth, high-availability architecture for training jobs

- Energy-efficient smart datacenters for AI workloads

- Substantially reduced cost per inference due to optimized GPU sharing

These ecosystems will form the foundation for the next generation of generative AI platforms built in India.

[Figure: Growth in AI Workload Density vs. Traditional Datacenters]

FAQs

They deliver GPU density, multi-zone resilience, and AI-optimized interconnects that traditional facilities lack, allowing enterprises to deploy AI workloads at national scale.

Startups gain access to on-demand GPU clusters, reduced capex, automated failover systems, and intelligent workload placement, allowing them to scale like global AI companies.

Through N+N multi-zone architecture, DRaaS-native design, AI-powered backup and restore, and high-availability cluster networks built specifically for enterprise AI.

Liquid cooling, 800G interconnects, real-time telemetry, AI-driven risk assessment, and unified GigaCampus-edge-core orchestration.

By reducing thermal throttling, improving power usage effectiveness (PUE), and enabling higher sustained GPU performance during training and inference.
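For readers less familiar with PUE (power usage effectiveness), it is the ratio of total facility energy to the energy consumed by IT equipment alone, so lower is better and 1.0 is the theoretical floor. The sketch below shows the arithmetic; the energy figures are hypothetical and chosen only to make the calculation easy to follow.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical monthly figures for the same 1,000 kWh IT load.
air_cooled = pue(total_facility_kwh=1600.0, it_equipment_kwh=1000.0)
liquid_cooled = pue(total_facility_kwh=1200.0, it_equipment_kwh=1000.0)

# Overhead is the part of PUE above 1.0 (cooling, power conversion, lighting).
overhead_reduction = 1.0 - (liquid_cooled - 1.0) / (air_cooled - 1.0)

print(f"air-cooled PUE:     {air_cooled:.2f}")
print(f"liquid-cooled PUE:  {liquid_cooled:.2f}")
print(f"overhead reduction: {overhead_reduction:.0%}")
```

In this made-up example, the IT load is unchanged while the non-IT overhead shrinks, which is the effect that better cooling is meant to deliver.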

Through intelligent workload scheduling, container orchestration tuned for GPU fabrics, and AI-based resource optimization engines deployed across the datacenter park.

Conclusion

AI Datacenter Parks will be the cornerstone of India’s digital future. As AI models grow, the infrastructure beneath them must evolve even faster. At RackBank, our mission is to build future-ready datacenters, engineered for AI innovation, not adapted to it.

From intelligent AI infrastructure to enterprise-scale resilience, the next decade belongs to organizations that treat datacenters as strategic assets, not passive back-end utilities. And the transformation has already begun.