India is in the middle of a compute boom that most infrastructure providers are not equipped to handle. The problem is not a lack of datacenters. The problem is that most of those datacenters were never designed for AI.

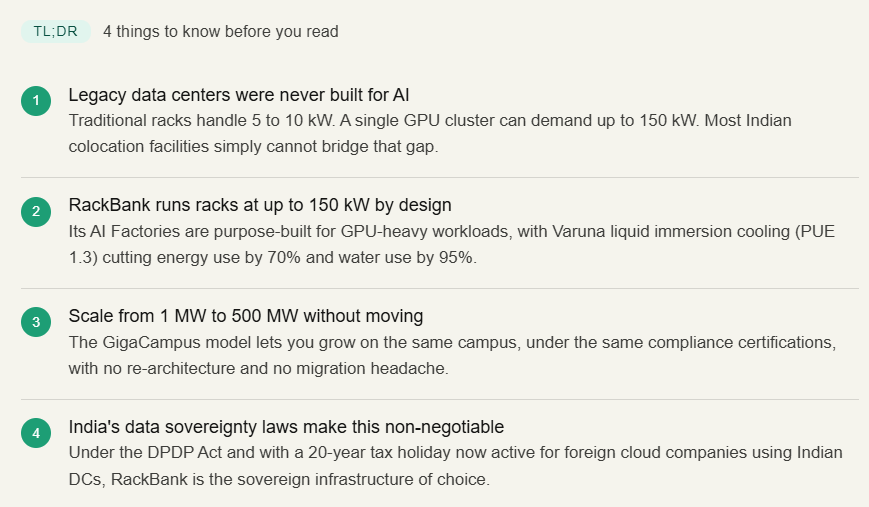

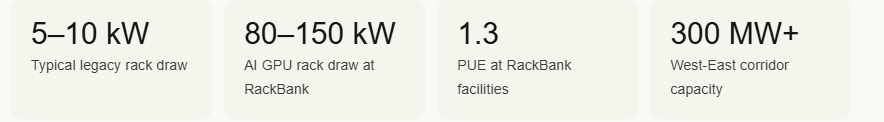

AI training workloads demand rack power densities that conventional facilities treat as edge cases. A standard enterprise server rack draws somewhere between 5 and 10 kilowatts. A single GPU-dense rack running H100 clusters can pull 80 to 150 kilowatts. That is not an incremental difference. It is a different class of infrastructure entirely.

This gap is forcing a fundamental rethink of what colocation actually means. The shift is away from space-as-a-service and toward power-as-infrastructure. RackBank was built to operate in that second world.

The infrastructure mismatch no one talks about

When enterprises began moving AI workloads into production at scale, a quiet problem surfaced. The GPU clusters were ready. The models were ready. The data pipelines were ready. But the facility could not support the power draw.

Older Tier III and Tier IV facilities in India were designed around 5 to 15 kW per rack. AI training rigs need five to twenty times that. Floor space is rarely the constraint. Power capacity, cooling architecture, and thermal density are.
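To see why power per rack is the binding constraint and not floor space, a rough sketch helps. It takes the 1 MW entry point quoted later in this article and asks how many racks that load occupies at each density. The 10 kW and 150 kW figures are picked from the ranges above for illustration, and `racks_needed` is a hypothetical helper, not part of any RackBank tooling.

```python
import math


def racks_needed(it_load_kw: float, kw_per_rack: float) -> int:
    """Racks required to host it_load_kw at a given per-rack power budget."""
    return math.ceil(it_load_kw / kw_per_rack)


IT_LOAD_KW = 1_000  # 1 MW of IT load (illustrative)

legacy_racks = racks_needed(IT_LOAD_KW, 10)   # legacy density, within the 5-15 kW range
dense_racks = racks_needed(IT_LOAD_KW, 150)   # GPU-dense rack, top of the 80-150 kW range

print(f"Legacy density: {legacy_racks} racks")
print(f"AI density:     {dense_racks} racks")
```

The same megawatt that spreads across a hall of legacy racks concentrates into a handful of GPU racks, so the facility has to deliver and cool that power in a far smaller footprint.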

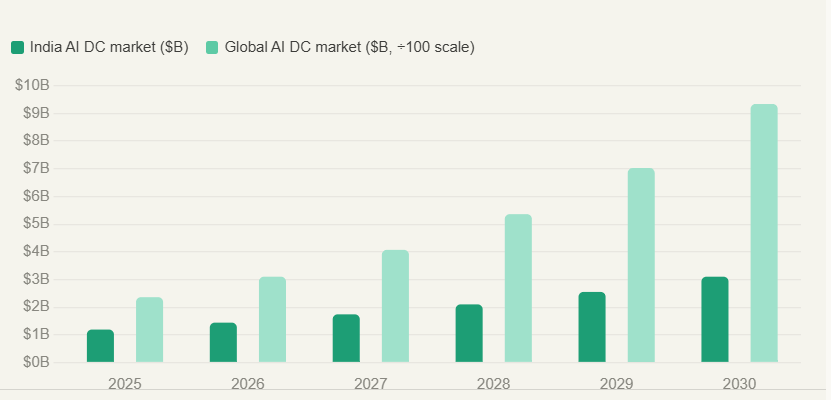

The global AI datacenter market was valued at $236 billion in 2025 and is projected to reach $933 billion by 2030, growing at a CAGR of 31.6%. India’s share of that is expected to grow from $1.19 billion to $3.1 billion in the same window. The infrastructure race has started. Most existing colocation providers are not positioned to run it.
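The growth figures can be sanity-checked with the standard compound annual growth rate formula. The sketch below assumes a five-year window (2025 to 2030) for both the global and the India numbers; the India window is an assumption read from "the same window", and the function names are illustrative.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years`."""
    return (end / start) ** (1 / years) - 1


def project(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at `rate` for `years`."""
    return start * (1 + rate) ** years


# Global market: $236B in 2025 compounding at 31.6% for 5 years.
global_2030 = project(236, 0.316, 5)

# India: implied growth rate from $1.19B to $3.1B over the same 5 years.
india_rate = cagr(1.19, 3.1, 5)

print(f"Global 2030 projection: ${global_2030:.0f}B")
print(f"India implied CAGR:     {india_rate:.1%}")
```

Under these assumptions the global projection lands within rounding of the quoted $933 billion, and the India figures imply a growth rate of roughly 21% a year.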

What AI-ready colocation actually requires

The term “AI-ready datacenter” has become marketing shorthand. Here is what it actually means in physical terms:

| Requirement | Legacy colocation | RackBank AI colocation |
|---|---|---|
| Rack power density | 5–15 kW per rack | Up to 150–200 kW per rack |
| Cooling method | Air cooling (CRAC/CRAH) | Direct-to-chip liquid cooling |
| GPU interconnect | Standard Ethernet | InfiniBand for GPU-to-GPU clustering |
| PUE (efficiency) | 1.6–2.0 typical | 1.3 guaranteed |
| Energy source | Grid (mixed) | 100% renewable, carbon-neutral |
| Uptime SLA | 99.9–99.99% | 99.999% |
| Scale path | Relocate when you outgrow | 1 MW → 500 MW, same campus |
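Two rows of this table translate directly into numbers. PUE is total facility power divided by IT power, and an uptime SLA caps the downtime allowed per year. The sketch below is a back-of-the-envelope calculation, assuming a 1 MW IT load and a 365-day year; the helper names are hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility draw implied by a PUE (total power / IT power)."""
    return it_load_kw * pue


def allowed_downtime_minutes(sla_percent: float) -> float:
    """Maximum downtime per year permitted by an availability SLA."""
    return (1 - sla_percent / 100) * MINUTES_PER_YEAR


IT_LOAD_KW = 1_000  # 1 MW of IT load (illustrative)

for pue in (1.3, 1.6, 2.0):
    total = facility_power_kw(IT_LOAD_KW, pue)
    print(f"PUE {pue}: {total:.0f} kW total, {total - IT_LOAD_KW:.0f} kW overhead")

for sla in (99.9, 99.99, 99.999):
    print(f"SLA {sla}%: {allowed_downtime_minutes(sla):.1f} min/year of downtime")
```

At 1 MW of IT load, moving from a PUE of 2.0 to 1.3 removes about 700 kW of cooling and distribution overhead, and a 99.999% SLA leaves just over five minutes of downtime a year against roughly 8.8 hours at 99.9%.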

The West-East corridor: geography as a competitive advantage

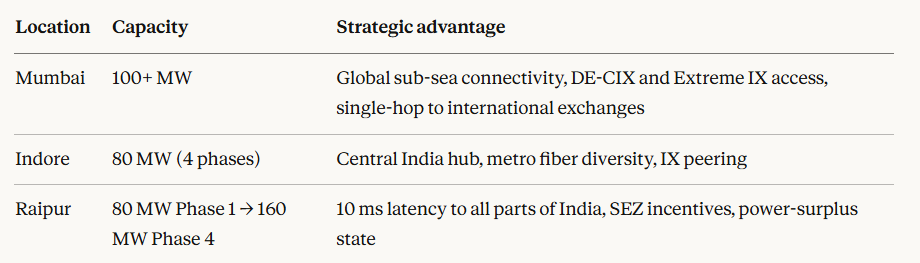

India’s AI infrastructure gap is partly a geography problem. Most existing datacenter capacity is concentrated in Mumbai and Chennai, creating latency disadvantages for the majority of India’s enterprise footprint. RackBank’s 300 MW+ West-East AI corridor is designed to close that gap.

The Raipur advantage deserves specific attention. Chhattisgarh is a power-surplus state, which matters at a draw of 80 to 160 MW. A 10 ms round-trip latency to all major Indian metros means inference workloads can run in Raipur with user-facing performance equivalent to a Mumbai deployment. And the SEZ status provides cost advantages that affect the total economics of running AI at scale.
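A latency budget can be turned into a distance with a common rule of thumb: light in optical fiber covers roughly 200 km per millisecond, about two-thirds of its speed in a vacuum. The sketch below counts propagation delay only; real paths add switching and routing delay and rarely follow a straight line, so treat the result as an upper bound on reach. The constant and function names are assumptions for illustration.

```python
FIBER_KM_PER_MS = 200.0  # rule-of-thumb propagation speed in fiber (approximation)


def propagation_rtt_ms(route_km: float) -> float:
    """Round-trip propagation delay over a fiber route of route_km."""
    return 2 * route_km / FIBER_KM_PER_MS


def max_route_km(rtt_budget_ms: float) -> float:
    """Longest fiber route that fits a round-trip budget (propagation only)."""
    return rtt_budget_ms * FIBER_KM_PER_MS / 2


print(f"10 ms RTT budget covers up to {max_route_km(10):.0f} km of fiber")
print(f"A 500 km route costs about {propagation_rtt_ms(500):.0f} ms round trip")
```

On propagation alone, a 10 ms round trip corresponds to about 1,000 km of fiber, which is why a centrally located site can plausibly reach metros on both coasts within that budget.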

No other Indian datacenter operator has built this kind of distributed, AI-specific, high-density corridor across Central and Western India simultaneously.

Colocation vs. cloud for AI workloads: the decision framework

Enterprise IT leaders and AI startup founders often face the same question: should we use managed GPU cloud, or co-locate our own hardware?

| Factor | Cloud GPU (managed) | High-density colocation |
|---|---|---|
| Hardware control | None | Full, including firmware and BIOS |
| Cost at scale | High at sustained 1,000+ GPU-hours/day | Significantly lower at sustained workloads |
| InfiniBand clustering | Limited or unavailable | Native InfiniBand for distributed training |
| Data sovereignty | Dependent on cloud provider policy | Full, within India, DPDP-compliant |
| CapEx timing | OpEx, pay-as-you-go | CapEx on hardware, OpEx on colo space |
| Scalability | On-demand, elastic | Planned expansion on same campus |
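The cost row comes down to a break-even calculation: cloud spend grows linearly with usage, while colocation front-loads hardware CapEx and then runs at a lower monthly cost. The sketch below shows the shape of that comparison. Every price in it is a hypothetical placeholder in arbitrary currency units, not a quote from RackBank or any cloud provider; only the 1,000 GPU-hours/day usage level comes from the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ColoPlan:
    hardware_capex: float     # upfront cost of owned GPUs (hypothetical)
    monthly_colo_opex: float  # power, space, and support per month (hypothetical)


def cloud_cost(gpu_hours_per_day: float, price_per_gpu_hour: float, months: int) -> float:
    """Cumulative cloud spend, assuming 30-day months and constant usage."""
    return gpu_hours_per_day * 30 * price_per_gpu_hour * months


def colo_cost(plan: ColoPlan, months: int) -> float:
    """Cumulative colocation spend: upfront hardware plus monthly running cost."""
    return plan.hardware_capex + plan.monthly_colo_opex * months


def break_even_month(gpu_hours_per_day: float, price_per_gpu_hour: float,
                     plan: ColoPlan, horizon_months: int = 60) -> Optional[int]:
    """First month in which colocation is no more expensive than cloud, if any."""
    for month in range(1, horizon_months + 1):
        if colo_cost(plan, month) <= cloud_cost(gpu_hours_per_day, price_per_gpu_hour, month):
            return month
    return None


# Placeholder inputs for illustration only.
plan = ColoPlan(hardware_capex=1_200_000, monthly_colo_opex=20_000)
month = break_even_month(gpu_hours_per_day=1_000, price_per_gpu_hour=2.0, plan=plan)
print(f"Break-even month: {month}")
```

With these placeholder inputs the crossover arrives at month 30. The real answer depends entirely on actual GPU pricing, utilisation, and hardware lifetime, and intermittent workloads may never reach break-even, which is where elastic cloud capacity keeps its advantage.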

Data sovereignty is not a feature. It is a compliance requirement.

Under India’s Digital Personal Data Protection Act, data localisation requirements for certain categories of sensitive personal data are no longer optional. BFSI, healthcare, and government workloads increasingly require that data never leaves Indian jurisdiction.

Foreign cloud providers operating in India do not, by default, provide infrastructure-level sovereignty guarantees. RackBank does. All facilities are Indian-owned, Indian-operated, and fully DPDP-compliant.

Budget 2026-27 introduced a 20-year tax holiday for foreign cloud companies that use Indian data centers to serve global customers, effective until 2047. The facility must be notified by MeitY and operated by an Indian entity.

GigaCampus: scaling without relocation

One of the structural costs AI companies rarely account for is the cost of outgrowing their infrastructure and having to migrate. Migration means new facility contracts, requalification of hardware, reconfiguration of networking, and updated compliance documentation. It is expensive and slow.

RackBank’s GigaCampus architecture eliminates this. A tenant starts at 1 MW and scales to 500 MW on the same campus, with no change in location, no re-architecture, and no disruption to running workloads. The campus supports up to 1 GW of IT load at full build-out across 100+ acres, with military-grade physical security and dual-source renewable energy.

Contract to commissioning in under 9 months. PUE of 1.3 guaranteed. ISO 27001, TIA-942 Rated 3, SOC 2 certified. The compliance documentation scales with you.

For enterprise IT heads evaluating 5-year infrastructure roadmaps, the GigaCampus model removes the largest variable: whether the facility can keep up with where the AI roadmap is going.

FAQs

It depends on power density and cooling. AI needs 80–150 kW per rack, which most legacy datacenters can’t handle. RackBank’s AI Factories in Raipur and Indore are built for this, with liquid cooling and InfiniBand-ready infrastructure.

Hyperscale means provider-owned cloud capacity. Colocation means your own hardware hosted in a third-party datacenter. For large-scale AI, colocation offers better cost control and flexibility.

Yes. Supports LLMs, RAG, RLHF, training, and inference with GPUs like H100, A100, GB200, and MI300X.

Yes. 100% India-based infrastructure with ISO 27001 and SOC 2 certification, aligned with data localisation laws.

The colocation shift has already started

The enterprises winning on AI in India are not necessarily the ones with the best models. They are the ones that secured infrastructure capable of training and running those models without throttling, without compliance exposure, and without outgrowing their facility every 18 months.

Colocation used to be about renting a rack in a building with good connectivity and a diesel generator backup. That era is over. The question now is whether your infrastructure can sustain 150 kW per rack, run at PUE 1.3, guarantee data sovereignty, and scale from 1 MW to 500 MW without requiring you to move. RackBank’s 300 MW+ West-East AI Factory corridor, GigaCampus architecture, and certified sovereign infrastructure are built specifically for that question.